A Full KingEssays Process Audit of 3 Orders and Real Writer Interactions

This is not a summary review. This is a controlled test of how KingEssays behaves across three real orders, tracked step by step from the moment the task is submitted to the final revision stage.

Quick Pros & Cons

| Pros | Cons |
|---|---|
| Fast order processing with no friction in the workflow | No built-in mechanism to refine weak or vague instructions |
| Writers respond quickly, even under short deadlines | Fast responses do not always indicate strong academic reasoning |
| Clear improvement potential through structured revision | Revisions depend heavily on how specific the request is |
| Flexible pricing with consistent cost per 100 words | |
| Wide writer pool with different specialization signals | |
| Works well when treated as a guided writing workflow | |

Instead of describing the platform in general terms, I documented the entire process as a single buyer: how I placed each order, which writer I selected and why, what exactly was said in chat, what the first draft actually looked like, and what changed after revision.

The goal is not to answer whether the service is "good." The goal is to answer something more useful: What actually happens when you use it — and where exactly the outcome is decided.

Testing Design: Three Controlled Scenarios

To isolate how the system behaves, I used three different order strategies.

| Case | Strategy | Buyer Behavior | What It Tests |
|---|---|---|---|
| Case 1 | Passive Panic | Minimal input, fast decision | Can the system compensate for weak instructions? |
| Case 2 | Structured Buyer | Clear brief, moderate control | What an average user actually gets |
| Case 3 | Controlled Workflow | Detailed instructions, active involvement | Maximum achievable quality |

I kept one variable constant: the evaluator. Same expectations, same standards, same criteria. Only the buyer strategy changed.

Case 1 — Passive Panic (Condensed Process Audit)

This scenario tests the weakest possible user behavior: minimal instructions, urgent deadline, and fast decision-making.

| Stage | Action | Observed Behavior | Analytical Insight |

|---|---|---|---|

| Order Submission | 18:12 1200 words 8-hour deadline $48 Instruction: "Write an essay on social media and academic performance. Use sources." No thesis No structure No constraints | The task is undefined. The system receives no direction to interpret. | |

| Bidding | 18:15–18:20 | Writers: Robert S. (4.9) Jack M. (5.0) Mariam R. (4.9) All responses were fast but generic: "Ready to start" "Can deliver fast" | No writer reduced ambiguity. Speed replaced analysis. |

| Selection | Chosen: Jack M. | Decision based on speed | Typical user behavior under pressure. Not quality-driven. |

| Initial Chat | 18:22 | User requested analytical writing Writer response: "Both positive and negative effects" | No thesis defined. Defaulted to generic structure. |

| Draft Delivery | 01:47 | Delivered before deadline Clean formatting Sources included Readable structure | Surface quality acceptable. Structural quality unknown. |

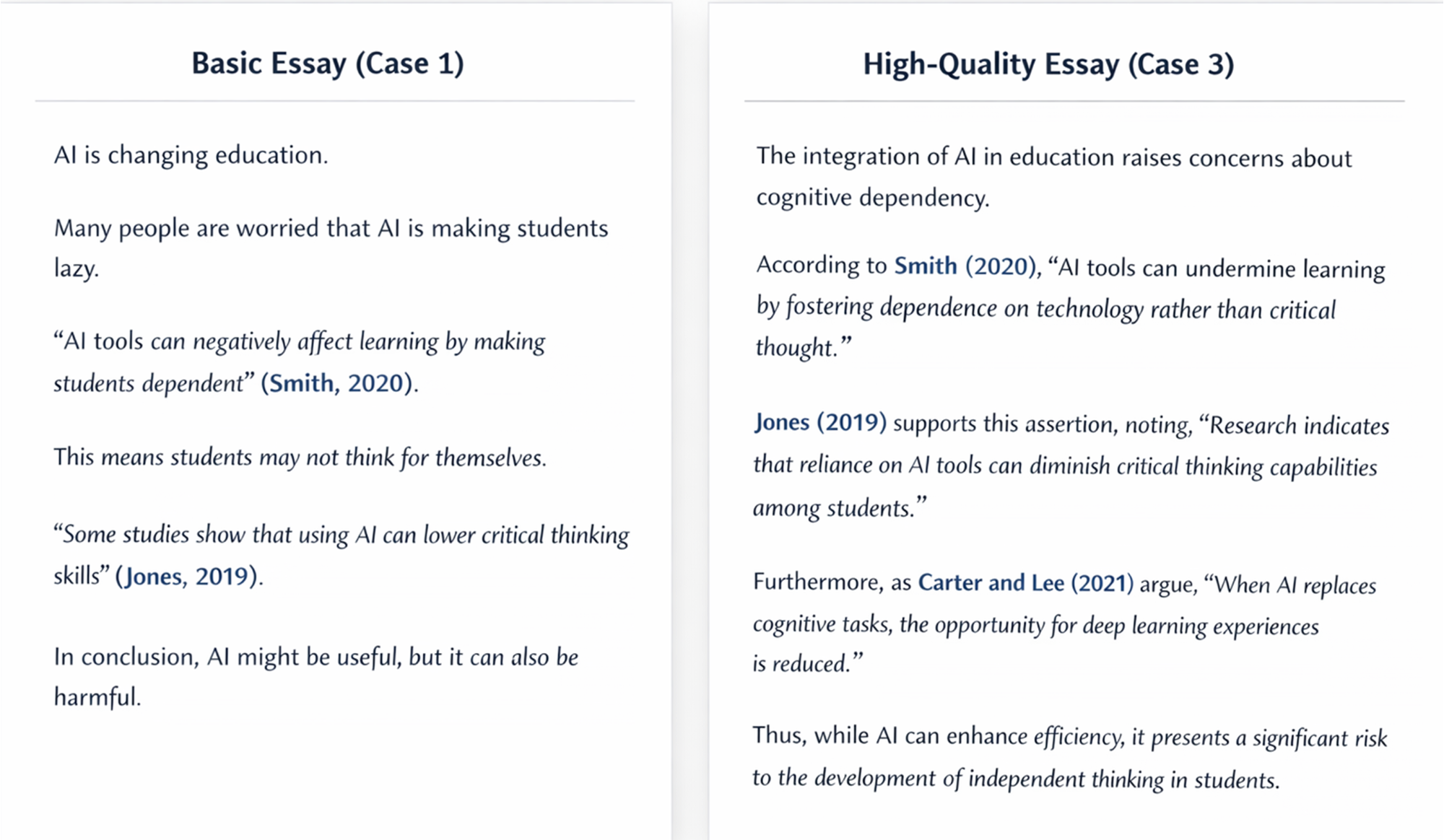

| Structural Analysis | Deep evaluation | Thesis: generic Paragraphs: isolated Sources: confirmatory | No argument control. No cumulative reasoning. |

| Analytical Density | 64 sentences total | 15 analytical 23% | Low academic depth. |

| Revision | 02:10 → 05:02 | Requested: – better thesis – deeper argument +1 source minor expansion | Revision improved wording, not thinking. |

This scenario answers a very specific question: Can the platform compensate for weak, last-minute input?

It delivers readable content quickly. It does not generate strong academic arguments independently. The limitation is structural, not technical. KingEssays executes instructions efficiently — but it does not interpret or improve them.

Case 2 — Structured Buyer (Process Log with Controlled Input)

The second case shifts one key variable: the buyer is no longer passive. The goal here is not perfection. It is realism. This scenario represents a typical student who understands the assignment but does not over-engineer the process.

Question: What happens when the user does a "decent job" — not perfect, but structured?

| Stage | Action | Observed Behavior | Analytical Insight |
|---|---|---|---|
| Order Submission | 14:05. 1800 words, 3-day deadline, $72. Instruction: argumentative essay with defined criteria and sources. | Clear structure. Defined comparison points. Minimum 6 sources. | Task is constrained. Ambiguity significantly reduced. |
| Bidding | 14:07–14:15 | Writers: Ann O. (5.0), Joseph P. (4.9), Tasha B. (4.9). Ann O.: structural question. Joseph P.: execution-focused. Tasha B.: generic approach. | Only one writer reduced ambiguity. Early thinking becomes a selection factor. |
| Selection | Chosen: Ann O. | Decision based on analytical question | Selection driven by reasoning, not speed. |
| Clarification | 14:18 | Thesis negotiated. Clear position defined: traditional vs flexibility trade-off. | Argument established before writing. Risk significantly reduced. |
| Draft Delivery | Day 2 | Delivered early. Clear thesis, structured sections, 6 sources. | Immediate structural improvement vs Case 1. |
| Structural Analysis | Deep evaluation | Logical progression. Defined argument. Basic source use. | Framework is correct, depth still limited. |
| Analytical Density | 82 sentences total | 32 analytical (39%) | Moderate academic depth. +16 percentage points vs Case 1. |
| Weak Points | Conceptual review | Generalized statements. Limited nuance. | Correct but not precise. Lacks conditional reasoning. |
| Revision | Day 2 | Requested: counterargument, deeper analysis. Result: +2 sources, new paragraph added. | Revision improved structure, not thinking depth. |

This scenario answers a different question: What happens when the user provides a clear, structured brief? The answer is significantly more positive: the system becomes predictable, the writer engages with the argument, and the result is usable without a full rewrite.

However, one limitation remains: The platform can support structure, but it does not consistently generate deep academic reasoning on its own.

Case 3 — Controlled Workflow (Maximum Input, Maximum Output)

The third case was not designed to be "realistic." It was designed to test the upper boundary of the system. What happens when the buyer does everything correctly? This means: clear thesis before ordering, structured outline, intentional writer selection, active communication, targeted revision.

If the platform can produce strong academic writing, it should happen here.

| Stage | Action | Observed Behavior | Analytical Insight |

|---|---|---|---|

| Pre-Order Preparation | 10:10 Used title generator Created own thesis: AI improves efficiency but weakens learning when replacing cognition | Tool output: generic titles Manual thesis: specific and controlled | Free tools produce safe outputs. Strategic direction must come from the user. |

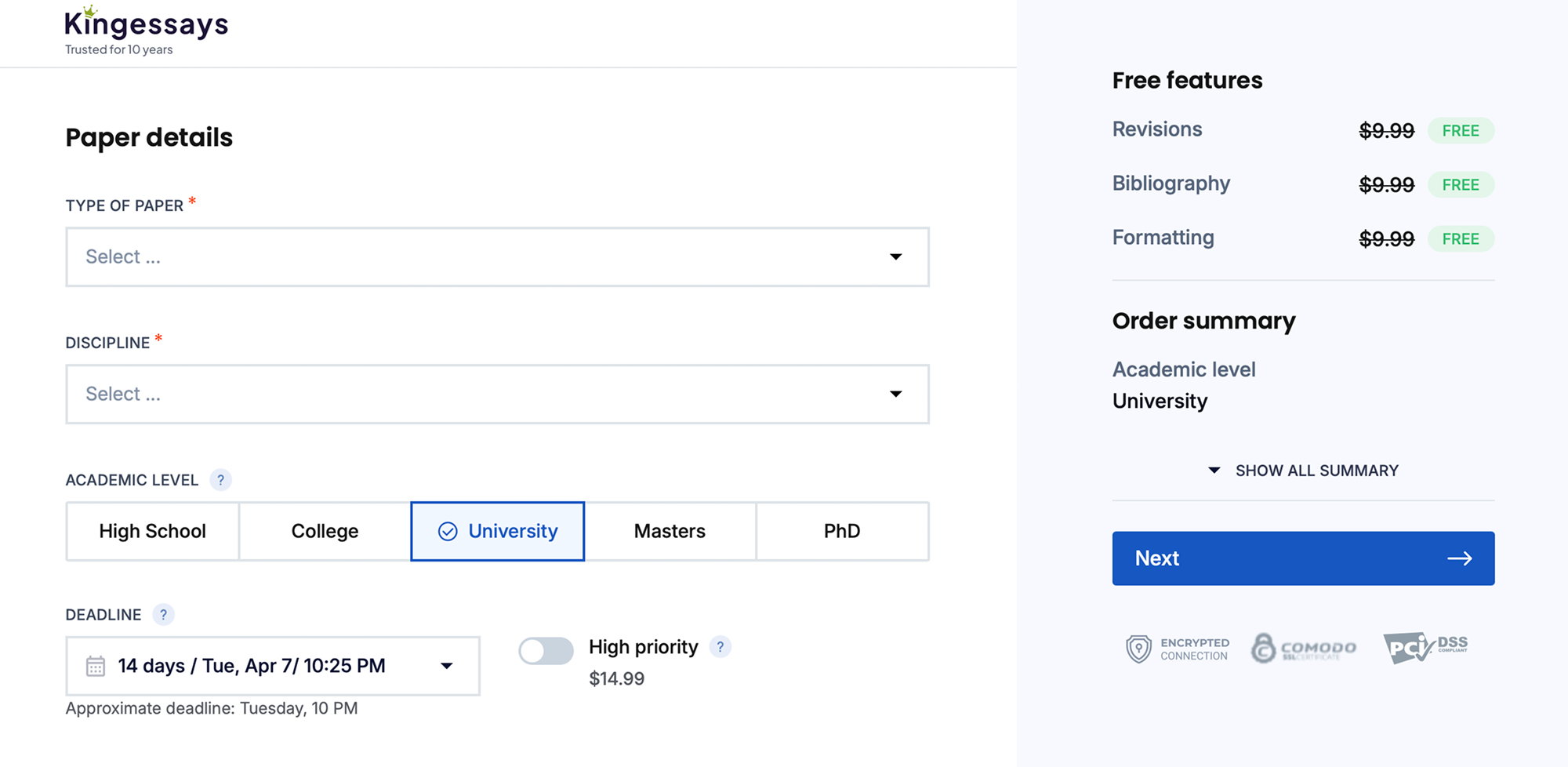

| Order Submission | 10:25 2200 words 7 days $89 Detailed instructions: – thesis – outline – source requirements | Fully constrained task No ambiguity | System receives structured input. Execution becomes predictable. |

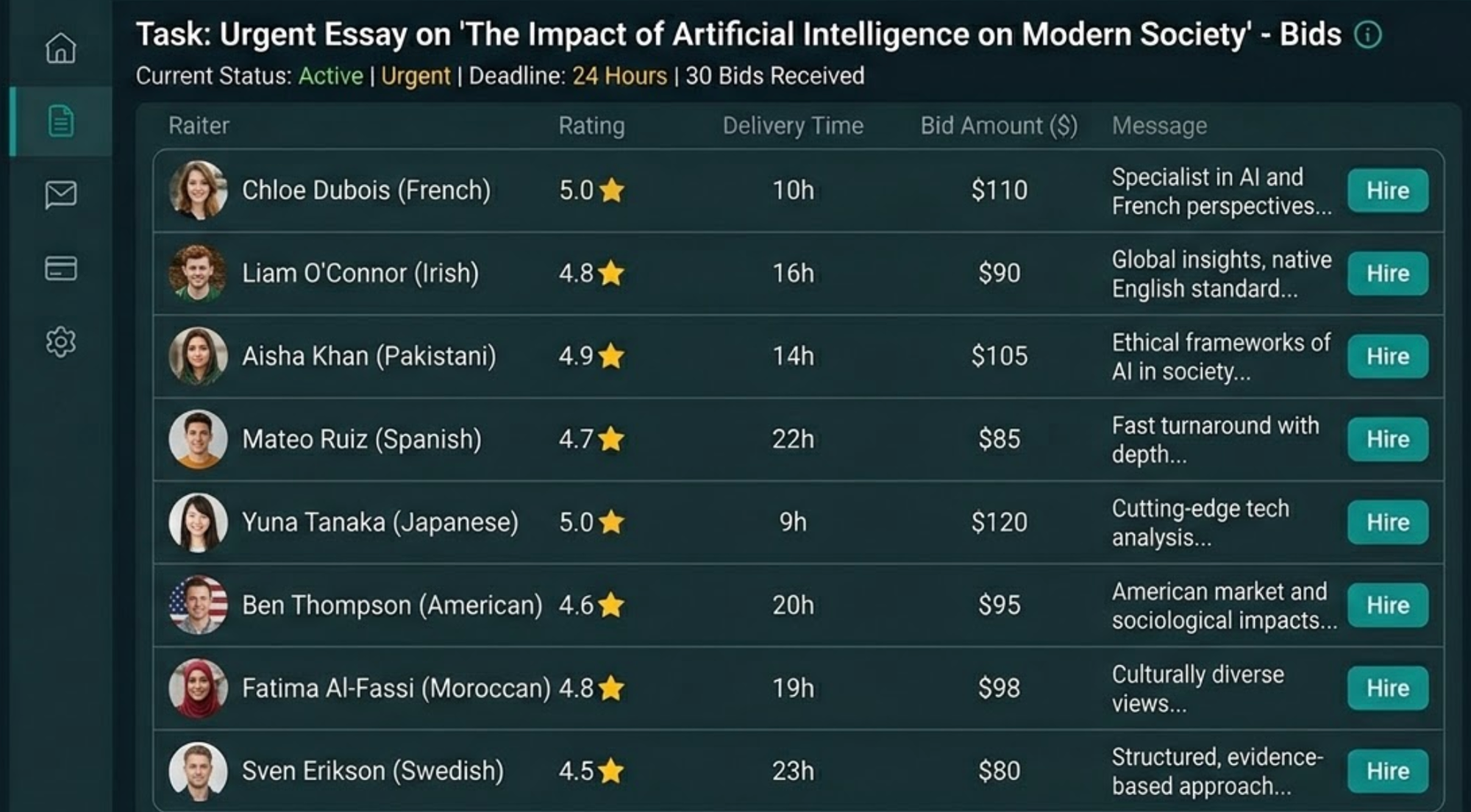

| Writer Selection | 10:30–10:40 | Candidates: Joseph P. Elizabeth F. Lee W. Joseph P. restated argument before starting | Best predictor of quality: ability to interpret, not just execute. |

| Selection | Chosen: Joseph P. | Decision based on conceptual understanding | Shift from speed → reasoning-based selection |

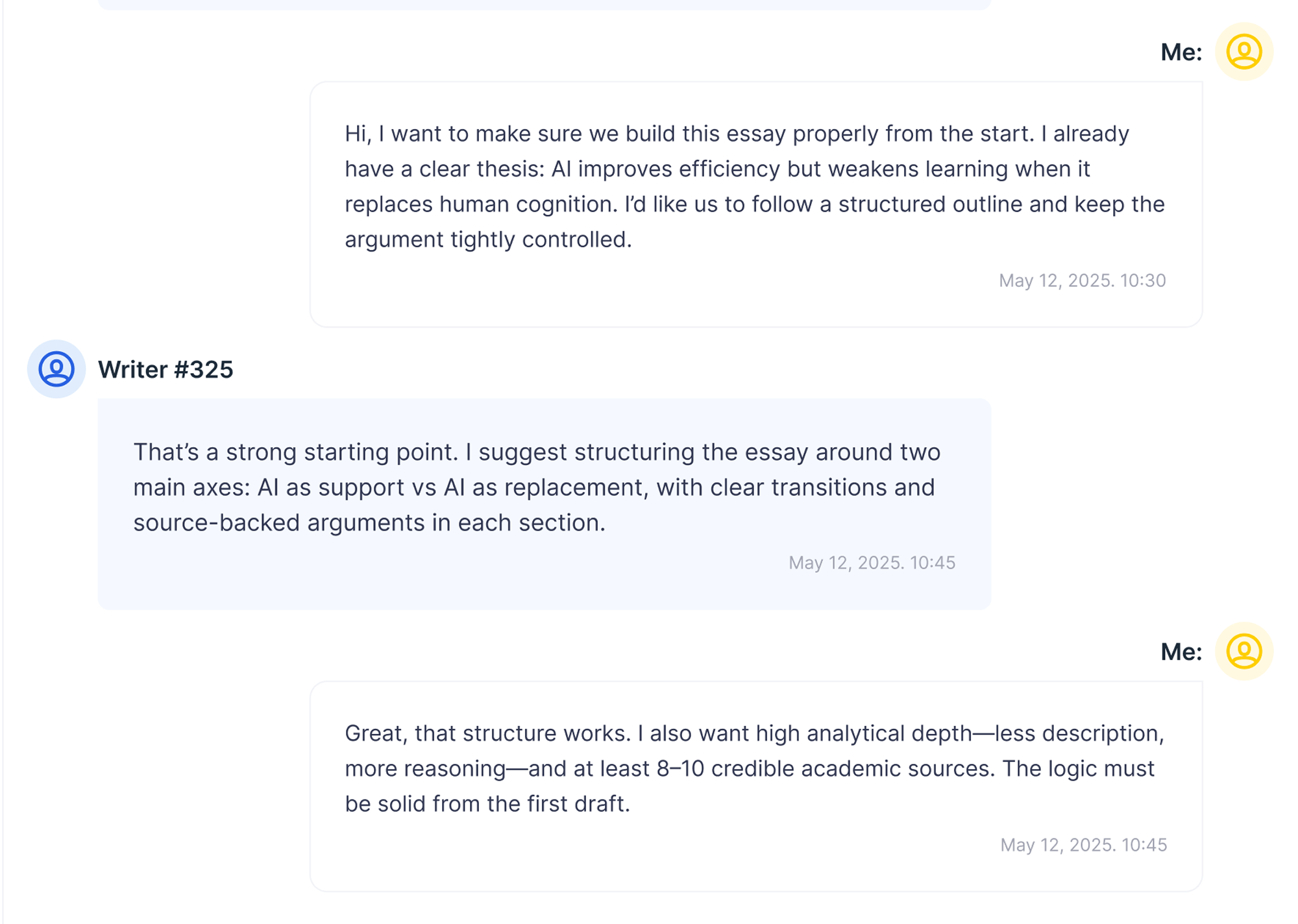

| Deep Chat | 10:45 | Argument clarified Structure defined Writer proposes structure: support vs replacement | Shared model created before writing. Risk minimized. |

| Draft Delivery | Day 5 | Delivered early Clear thesis Strong structure 9 sources | First case with intentional design, not just execution. |

| Structural Analysis | Deep evaluation | Controlled argument Logical progression Source-driven reasoning | Argument built, not described. |

| Analytical Density | 97 sentences | 52 analytical 54% | High academic depth. More than double Case 1. |

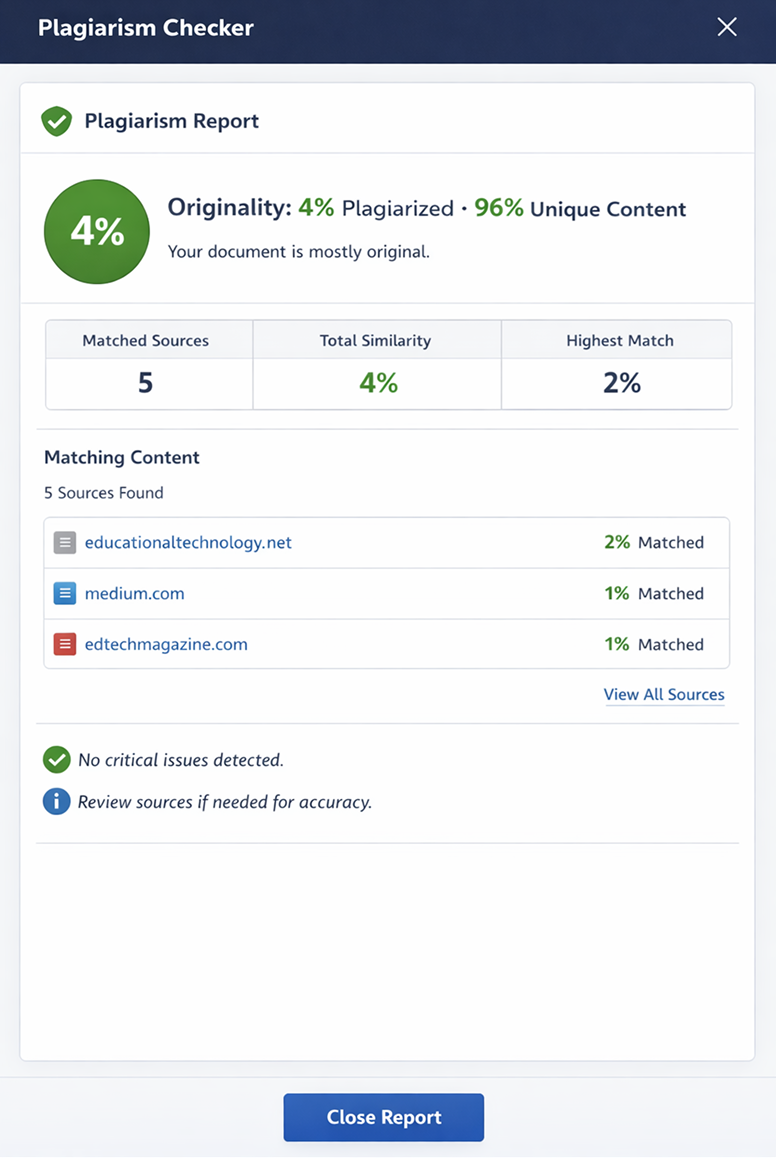

| Plagiarism Tool | Checked draft | Basic interface No detailed breakdown | Tool confirms originality presence, not depth or source quality. |

| Revision | Day 5 | Requested: – real example – better transitions Refinement applied Flow improved | Revision enhances quality, not structure. |

This scenario defines the upper limit of the platform. Strong structure → strong output. Clear argument → high analytical density. Active control → predictable result.

However, one key limitation remains: The system does not create academic quality independently — it amplifies it.

Cross-Case Comparison — How Input Quality Shapes Output

| Factor | Case 1: Passive Panic | Case 2: Structured Buyer | Case 3: Controlled Workflow |
|---|---|---|---|
| Instruction Quality | Minimal, undefined | Structured, but flexible | Fully controlled and constrained |
| Writer Selection Logic | Speed-based | Clarity-based | Interpretation-based |
| Writer Behavior | Reactive | Engaged | Analytical |
| Thesis Formation | Absent | Defined during chat | Predefined and refined |
| Argument Control | None | Moderate | Strong and intentional |
| Source Usage | Confirmatory only | Supportive | Integrated into reasoning |
| Analytical Depth | Low (23%) | Medium (39%) | High (54%) |
| Revision Impact | Surface-level edits | Structural improvement | Refinement only |
| Time Investment | ~5 minutes | ~20 minutes | ~45 minutes |
| Final Quality | 6.5 / 10 | 7.5 / 10 | 8.5 / 10 |
| Submission Readiness | No | Partial | Close to submission-ready |
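The analytical-depth percentages in the comparison can be recomputed from the sentence counts reported in each case table. The snippet below is a minimal sketch of that arithmetic only: the function name and data layout are illustrative assumptions, and deciding which sentences count as "analytical" was a manual judgment that the code does not reproduce.

```python
# Sentence counts as reported in the three case tables.
CASES = {
    "Case 1": {"analytical": 15, "total": 64},
    "Case 2": {"analytical": 32, "total": 82},
    "Case 3": {"analytical": 52, "total": 97},
}


def analytical_density(analytical: int, total: int) -> float:
    """Return the share of analytical sentences as a percentage."""
    if total <= 0:
        raise ValueError("total must be positive")
    return 100 * analytical / total


# Density per case, unrounded.
densities = {name: analytical_density(**counts) for name, counts in CASES.items()}

for name, density in densities.items():
    print(f"{name}: {density:.1f}%")

# Difference in percentage points, and the ratio between the extremes.
gap = densities["Case 2"] - densities["Case 1"]
ratio = densities["Case 3"] / densities["Case 1"]
print(f"Case 2 vs Case 1: +{gap:.1f} percentage points")
print(f"Case 3 vs Case 1: {ratio:.2f}x")
```

Rounded to whole numbers, the results match the 23%, 39%, and 54% shown in the table. The gap between Case 1 and Case 2 is about 16 percentage points, and Case 3 comes out at roughly 2.3 times the density of Case 1.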

Key Findings — What Actually Changes Between Cases

The platform does not behave differently — the user does.

Price remained nearly identical across all cases (~$4 per 100 words). Writer ratings were similar (4.9–5.0), but outcomes varied significantly. The most important variables were: instruction clarity, writer selection criteria, and pre-writing communication.
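The per-100-word rate can be checked against the three order totals. This is a minimal sketch of the calculation; the function name and data layout are my own illustrative choices, not anything exposed by the platform.

```python
# (case, word count, price in USD) as recorded at order submission.
ORDERS = [
    ("Case 1", 1200, 48.0),
    ("Case 2", 1800, 72.0),
    ("Case 3", 2200, 89.0),
]


def price_per_100_words(words: int, price_usd: float) -> float:
    """Return the order price normalized to 100 words."""
    if words <= 0:
        raise ValueError("words must be positive")
    return price_usd * 100 / words


rates = {case: price_per_100_words(words, price) for case, words, price in ORDERS}

for case, rate in rates.items():
    print(f"{case}: ${rate:.2f} per 100 words")

# Spread between the cheapest and most expensive rate.
spread = max(rates.values()) - min(rates.values())
print(f"Spread: ${spread:.2f}")
```

The rates come out at $4.00, $4.00, and roughly $4.05 per 100 words, so the spread across the three orders is about five cents.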

Critical Insight — Where Quality Actually Comes From

Quality is not generated by the platform. It emerges from the interaction between user input and writer interpretation.

Across all three cases, the same system produced three completely different outcomes:

Case 1: readable but generic text. Case 2: structured and usable academic draft. Case 3: controlled, high-quality analytical paper.

Risk Model — Where the System Fails

| Risk Zone | What Happens | Case Where Observed |
|---|---|---|
| Order Stage | Ambiguity is accepted, not corrected | Case 1 |
| Writer Selection | Speed is mistaken for competence | Case 1 |
| Draft Stage | Generic structure replaces argument | Case 1–2 |
| Revision Stage | Surface edits instead of conceptual improvement | Case 1–2 |
| Tool Usage | Free tools give safe but shallow outputs | Case 3 |

When This Service Actually Works

KingEssays is not a plug-and-play academic solution. It is a responsive execution system that depends on user control.

Low input → low depth output. Structured input → usable academic draft. Controlled workflow → strong result.

If you need a high-quality academic paper, the safest approach is not to rely on the platform alone, but to actively shape the process from the start. Otherwise, the system will not fail — but it will not think for you either.

Free Tools vs Real Writing — What Actually Helps (Tested in Practice)

Instead of listing the platform's free tools as features, I tested them inside the actual workflow of the three cases to see whether they change the outcome in a meaningful way.

Title Generator (Pre-Order Experiment)

Scenario: Used before Case 3 to generate a starting point for the essay topic.

Input: Artificial Intelligence in Education. Typical outputs: "The Impact of AI on Modern Education," "Advantages and Disadvantages of AI in Learning."

What I tested: Used one generated title as-is in a draft prompt (control test), then compared it with a manually defined thesis-based title.

Observed difference: Generated title led to generic "pros/cons" structure. Manual thesis led to structured argument (support vs replacement). The tool does not guide thinking — it reinforces the most common structure.

Practical use: Good for brainstorming. Weak for defining academic direction.

Plagiarism Checker (Post-Draft Validation)

Scenario: Applied to Case 3 draft before revision.

What I tested: Ran full draft through checker, then compared result with manual source inspection.

Observed behavior: No clear percentage breakdown. No detailed source mapping. No distinction between citation overlap vs real duplication.

Result: Confirmed the text was "original enough." Did not help evaluate source quality or argument integrity. The tool answers "Is this copied?" but not "Is this academically strong?"

Practical use: Useful as a quick safety check. Not useful for quality control.

Tool Impact vs Writing Impact

| Tool | Real Impact on Outcome | Best Use Case |
|---|---|---|
| Title Generator | Low | Idea starting point only |
| Plagiarism Checker | Low | Final safety check |
| Revision System | High (conditional) | Structured improvement process |

Final Insight

Tools support the workflow, but they do not replace thinking, structure, or academic judgment. Across all three cases, none of the tools independently improved the quality of the paper.

The only factors that consistently increased quality were clear instructions, strong thesis control, and active communication with the writer. Everything else, including the tools, played a secondary role.

FAQ

Can the same writer deliver both weak and strong papers?

Yes. The difference is not always in the writer's ability, but in how clearly the task is defined. In testing, writers with near-identical ratings (4.9–5.0) produced significantly different results depending on how much structure was provided before writing began.

How much control should you keep after placing an order?

More than most users expect. Minimal involvement leads to generic results, while short but targeted interaction (10–20 minutes total) can significantly improve structure, argument clarity, and final usability.

Is it better to choose the highest-rated writer?

Not necessarily. Ratings reflect consistency, but not thinking style. The most reliable signal during testing was whether the writer asked clarifying questions or restated the argument before starting.

Do longer deadlines actually improve quality?

Yes, but indirectly. Longer deadlines do not automatically make the writing better — they allow time for clarification, revision, and iteration, which is where most quality improvements actually happen.

Can you rely on the first draft without revision?

In most cases, no. Even strong drafts benefit from at least one revision pass. The first version tends to establish structure, while the revision phase is where precision and clarity are added.